As emerging conversational Artificial Intelligence (AI) systems become increasingly embedded in our daily lives, the paradigm of human-computer interaction is shifting dramatically. We are no longer simply using tools; we are interacting with systems that simulate human conversation, reasoning, and empathy. For platforms like Formal Psychology, understanding the Psychology of Artificial Intelligence is critical. It requires us to examine how these systems affect human cognition, social behavior, and the variables that govern trust.

This article delves into the socio-cognitive implications, trust dynamics, and the evolving nature of human-technology interactions driven by modern conversational AI.

The Socio-Cognitive Implications of Conversational AI

Interacting with sophisticated AI triggers deeply ingrained socio-cognitive processes. Humans are strongly predisposed to social connection, and conversational AI systems are explicitly designed to mimic the cues that trigger these responses.

1. The ELIZA Effect and Anthropomorphism

The tendency to attribute human traits, emotions, and intentions to non-human entities is known as anthropomorphism. In the context of AI, this is often referred to as the “ELIZA effect,” named after ELIZA, the 1960s chatbot created by Joseph Weizenbaum at MIT. Users quickly developed emotional attachments to it, despite its simple programming.
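To give a sense of how little machinery can produce this effect, here is a minimal ELIZA-style sketch in Python. It is an illustration only: the rules, the `respond` and `reflect` functions, and the fallback line are hypothetical and are not Weizenbaum's original script. The program simply matches a keyword pattern and echoes the user's own words back as a question.

```python
import re

# Hypothetical rules for illustration only; not the original ELIZA script.
# Each rule pairs a keyword pattern with a response template.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}


def reflect(fragment: str) -> str:
    """Drop trailing punctuation and flip pronouns in the captured fragment."""
    words = fragment.rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word.lower(), word) for word in words)


def respond(utterance: str) -> str:
    """Return the template of the first matching rule, or a generic prompt."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I feel ignored by my friends"))
```

Nothing in this sketch models emotion or understanding, yet a reply that reuses the speaker's own phrasing can still read as attentive, which is the core of the effect described above.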

When a conversational AI uses natural language, pauses, or empathetic phrasing, users naturally attribute mental states to the system, in effect applying their “Theory of Mind” to it. They subconsciously begin to treat the AI not as software, but as a social agent. This can lead to profound emotional connections, but also to significant misunderstandings about what the AI can actually do and whether it is sentient.

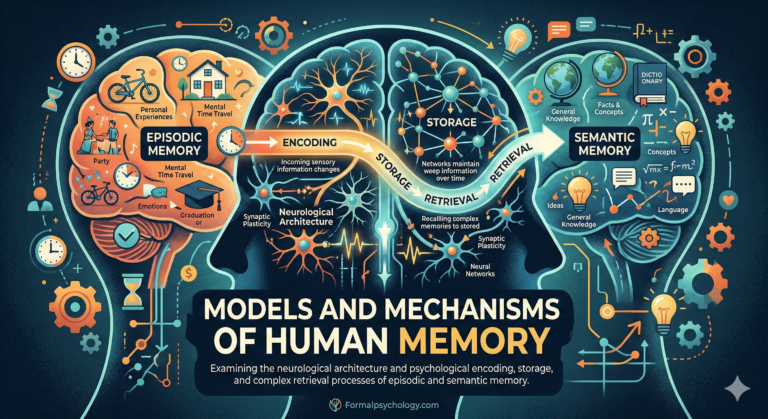

2. Cognitive Offloading and Human Memory

With highly capable AI systems readily available to answer questions, draft text, and solve problems, humans increasingly engage in “cognitive offloading.” This is the psychological process of relying on external tools or resources (in this case, AI) to reduce the demands on our own cognition.

While cognitive offloading frees up mental bandwidth for higher-level thinking, continuous reliance on AI may weaken memory retention, analytical skills, and independent problem-solving abilities. The psychological implication is a shift in how we process information: from “knowing the answer” to “knowing how to ask the AI.”

Trust Variables in Human-AI Interaction

Trust is the fundamental currency of any relationship, including the human-AI relationship. In the psychology of artificial intelligence, trust is not static; it is a dynamic variable influenced by several key psychological factors.

1. Algorithm Aversion vs. Algorithm Appreciation

Psychological research reveals two opposing tendencies in how humans trust machines:

- Algorithm Aversion: The tendency for humans to lose confidence in an algorithm more quickly than in a human after seeing it make a mistake, even if the algorithm generally outperforms humans.

- Algorithm Appreciation: Conversely, when users seek objective, data-driven advice (like financial forecasting or medical diagnostics), they may trust the machine more than human judgment because they perceive it as free of human bias.
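The aversion pattern described above can be sketched as a toy model in Python. This is only an illustration under stated assumptions, not an established model from the research literature: the update rule, the function names, and the `gain` and `loss` rates are all hypothetical. Trust rises slowly after a correct answer and drops after an error, and the only difference between the two advisors is that errors by the algorithm are penalized more heavily.

```python
def update_trust(trust: float, correct: bool, gain: float, loss: float) -> float:
    """Move trust toward 1.0 after a correct answer, toward 0.0 after an error."""
    if correct:
        return trust + gain * (1.0 - trust)
    return trust - loss * trust


def simulate(outcomes, loss: float, initial: float = 0.5, gain: float = 0.05) -> float:
    """Apply the update rule over a sequence of outcomes and return final trust."""
    trust = initial
    for correct in outcomes:
        trust = update_trust(trust, correct, gain, loss)
    return trust


# Both advisors produce the same record: three correct answers and one error.
outcomes = [True, True, False, True]

# Assumed penalty rates (hypothetical): an algorithm's error costs more trust
# than the same error made by a human.
human_trust = simulate(outcomes, loss=0.10)
algorithm_trust = simulate(outcomes, loss=0.30)

print(f"human advisor:     {human_trust:.3f}")
print(f"algorithm advisor: {algorithm_trust:.3f}")
```

With identical track records, the algorithm ends with lower trust purely because of the steeper penalty. That asymmetry is the pattern algorithm aversion describes.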

2. Transparency and Explainability

Cognitive trust relies heavily on predictability and understanding. When conversational AI acts as a “black box,” providing answers without revealing how those conclusions were reached, user trust remains fragile. Psychological comfort increases when AI systems offer “explainability,” allowing the user to map the system’s simulated reasoning process to their own cognitive frameworks.

3. Emulated Warmth and Competence

Social psychology research, notably the stereotype content model, holds that people judge others on two primary dimensions: warmth (their intentions toward us) and competence (their ability to carry out those intentions).

Conversational AI is engineered to project both. By using polite, accommodating language, AI simulates “warmth.” By producing detailed answers almost instantly, it signals “competence.” This combination artificially fast-tracks the trust-building process, sometimes leading to over-reliance or unwarranted vulnerability on the part of the human user.

The Future of Human-Technology Interaction

The shift from command-line interfaces to conversational AI represents a move from unilateral direction to bilateral negotiation. We are entering an era of human-machine symbiosis.

- The Parasocial Phenomenon: Just as individuals develop one-sided relationships with celebrities or fictional characters (parasocial relationships), users are forming similar bonds with AI assistants. These interactions fulfill basic needs for communication and validation without the social friction inherent in human relationships.

- Identity and Self-Perception: As AI becomes a sounding board for our thoughts, it begins to act as a mirror. How an AI responds to a user’s prompts can subtly reinforce or challenge the user’s beliefs, attitudes, and self-perception, acting as an invisible hand in human identity formation.

Conclusion

The Psychology of Artificial Intelligence shows that interacting with emerging conversational AI is far more than a technological exchange; it is a profound psychological event. As these systems become more integrated into our cognitive processes and social lives, understanding the socio-cognitive implications and trust variables becomes paramount. For students, researchers, and readers of Formal Psychology, observing this space offers a real-time view into the evolution of human cognition and social behavior in the digital age.